AI models make stuff up. How can hallucinations be controlled?

There are kinder ways to put it. In its instructions to users, OpenAI warns that ChatGPT “can make mistakes”. Anthropic, an American AI company, says that its LLM Claude “may display incorrect or harmful information”; Google’s Gemini warns users to “double-check its responses”. The throughline is this: no matter how fluent and confident AI-generated text sounds, it still cannot be trusted.

Hallucinations make it hard to rely on AI systems in the real world. Mistakes in news-generating algorithms can spread misinformation. Image generators can produce art that infringes on copyright, even when told not to. Customer-service chatbots can promise refunds they shouldn’t. (In 2022 Air Canada’s chatbot concocted a bereavement policy, and this February a Canadian court confirmed that the airline must foot the bill.) And hallucinations in AI systems that are used for diagnosis or prescription can kill.

All the leaves are brown

The trouble is that the same abilities that allow models to hallucinate are also what make them so useful. For one, LLMs are a form of “generative” AI, which, taken literally, means they make things up to solve new problems. They do this by producing probability distributions for chunks of characters, or tokens, laying out how likely it is for each possible token in their vocabulary to come next. The mathematics dictate that each token must have a non-zero chance of being chosen, giving the model flexibility to learn new patterns, as well as the capacity to generate statements that are incorrect. The fundamental problem is that language models are probabilistic, while truth is not.
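To see why no token is ever ruled out entirely, consider a minimal sketch of the softmax function, the usual way raw model scores are turned into probabilities. The four-token vocabulary and the scores here are invented for illustration and do not come from any real model.

```python
import math

def softmax(logits):
    """Turn raw scores (logits) into a probability distribution."""
    peak = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

# Hypothetical vocabulary and made-up scores for the prompt
# "The capital of France is".
vocab = ["Paris", "Lyon", "London", "banana"]
logits = [9.0, 4.0, 2.5, -3.0]

probs = softmax(logits)
for token, p in zip(vocab, probs):
    print(f"{token:>7}: {p:.6f}")
```

Because the exponential of any finite score is positive, every token keeps some probability in exact arithmetic, however small. “Paris” dominates, but “banana” is never strictly impossible.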

This tension manifests itself in a number of ways. One is that LLMs are not built to have perfect recall in the way a search engine or encyclopedia might. Instead, because the size of a model is much smaller than the size of its training data, it learns by compressing. The model becomes a blurry picture of its training data, retaining key features but at much lower resolution. Some facts resist blurring—“Paris”, for example, may always be the highest-probability token following the words “The capital of France is”. But many more facts that are less statistically obvious may be smudged away.

Further distortions are possible when a pretrained LLM is “fine-tuned”. This is a later stage of training in which the model’s weights, which encode statistical relationships between the words and phrases in the training data, are updated for a specific task. Hallucinations can increase if the LLM is fine-tuned, for example, on transcripts of conversations, because the model might make things up to try to be interesting, just as a chatty human might. (Simply including fine-tuning examples where the model says “I don’t know” seems to keep hallucination levels down.)

Tinkering with a model’s weights can reduce hallucinations. One method involves creating a deliberately flawed model trained on data that contradict the prompt or contain information it lacks. Researchers can then subtract the weights of the flawed model, which are in part responsible for its output, from those of the original to create a model which hallucinates less.

It is also possible to change a model’s “temperature”. Lower temperatures make a model more conservative, encouraging it to sample the most likely word. Higher temperatures make it more creative, by increasing the randomness of this selection. If the goal is to reduce hallucinations, the temperature should be set to zero. Another trick is to limit the choice to the top-ranked tokens alone. This reduces the likelihood of poor responses, while also allowing for some randomness and, therefore, variety.
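Both knobs can be sketched in a few lines. The function below is an illustration only: the name `sample_token` and its parameters are hypothetical, and real inference libraries expose these settings in their own ways.

```python
import math
import random

def sample_token(logits, temperature=1.0, top_k=None, rng=random):
    """Pick a token index from raw scores using temperature and optional top-k."""
    # Rank candidate indices from highest to lowest score.
    ranked = sorted(range(len(logits)), key=lambda i: logits[i], reverse=True)
    if top_k is not None:
        ranked = ranked[:top_k]  # keep only the top-ranked tokens
    if temperature == 0:
        return ranked[0]  # greedy: always the single most likely token
    # Dividing by the temperature sharpens (<1) or flattens (>1) the distribution.
    scaled = [logits[i] / temperature for i in ranked]
    peak = max(scaled)
    weights = [math.exp(s - peak) for s in scaled]
    return rng.choices(ranked, weights=weights, k=1)[0]

logits = [1.0, 3.0, 2.0, -5.0]                        # made-up scores
print(sample_token(logits, temperature=0))            # always index 1
print(sample_token(logits, temperature=1.5, top_k=2)) # index 1 or 2, at random
```

At a temperature of zero the choice is deterministic. With `top_k=2` the two lowest-scoring tokens can never be picked, yet the output still varies between the two that remain.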

Clever prompting can also reduce hallucinations. Researchers at Google DeepMind found that telling an LLM to “take a deep breath and work on this problem step-by-step” reduced hallucinations and improved problem solving, especially of maths problems. One theory for why this works is that AI models learn patterns. By breaking a problem down into smaller ones, it is more likely that the model will be able to recognise and apply the right one. But, says Edoardo Ponti at the University of Edinburgh, such prompt engineering amounts to treating a symptom, rather than curing the disease.

Perhaps, then, the problem is that accuracy is too much to ask of LLMs alone. Instead, they should be part of a larger system—an engine, rather than the whole car. One solution is retrieval augmented generation (RAG), which splits the job of the AI model into two parts: retrieval and generation. Once a prompt is received, a retriever model bustles around an external source of information, like a newspaper archive, to extract relevant contextual information. This is fed to the generator model alongside the original prompt, prefaced with instructions not to rely on prior knowledge. The generator then acts like a normal LLM and answers. This reduces hallucinations by letting the LLM play to its strengths—summarising and paraphrasing rather than researching. Other external tools, from calculators to search engines, can also be bolted onto an LLM in this way, effectively building it a support system to enhance those skills it lacks.
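The two-step flow can be sketched as follows. This is a toy under stated assumptions: the retriever simply counts shared words, where real systems typically use learned embeddings, and the archive passages, function names and prompt wording are all invented for illustration. The final call to a generator model is left out.

```python
import re

def words(text):
    """Lower-case a text and split it into a set of words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def retrieve(query, archive, top_n=2):
    """Toy retriever: rank passages by how many words they share with the query."""
    q = words(query)
    ranked = sorted(archive, key=lambda doc: len(q & words(doc)), reverse=True)
    return ranked[:top_n]

def build_prompt(query, passages):
    """Combine retrieved passages and the question, telling the model to stick to them."""
    context = "\n".join(f"- {p}" for p in passages)
    return (
        "Answer using only the context below, not prior knowledge.\n"
        f"Context:\n{context}\n"
        f"Question: {query}"
    )

# Made-up passages standing in for an external archive.
archive = [
    "The airline's chatbot described a bereavement policy that did not exist.",
    "Lower temperatures make a model more conservative.",
    "A court confirmed that the airline must pay the refund.",
]
query = "What did the court say about the airline refund?"
prompt = build_prompt(query, retrieve(query, archive))
print(prompt)  # this string would then be sent to the generator model
```

The generator never sees the whole archive, only the passages the retriever judged relevant, which is what lets it summarise rather than recall.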

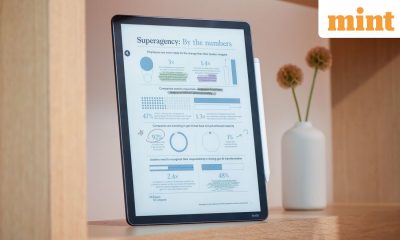

Even with the best algorithmic and architectural antipsychotics available, however, LLMs still hallucinate. One leaderboard, run by Vectara, an American software company, tracks how often such errors arise. Its data shows that GPT-4 still hallucinates in 3% of its summaries, Claude 2 in 8.5% and Gemini Pro in 4.8%. This has prompted programmers to try detecting, rather than preventing, hallucinations. One clue that a hallucination is under way lies in how an LLM picks words. If the probability distribution of the words is flat, ie many words have similar likelihoods of being chosen, this means that there is less certainty as to which is most likely. That is a clue that it might be guessing, rather than using information it has been prompted with and therefore “knows” to be true.
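One common way to put a number on “flatness” is Shannon entropy. The sketch below uses entropy as an assumed stand-in for whatever measure a given detector employs, and the two distributions are invented for illustration.

```python
import math

def entropy(probs):
    """Shannon entropy in bits; higher means a flatter, less certain distribution."""
    return -sum(p * math.log2(p) for p in probs if p > 0)

confident = [0.97, 0.01, 0.01, 0.01]  # one token dominates
uncertain = [0.25, 0.25, 0.25, 0.25]  # every token equally likely

print(entropy(confident))
print(entropy(uncertain))  # 2.0 bits, the maximum for four options
```

A detector built on this idea would flag passages where the entropy stays high over several consecutive tokens. The threshold would need tuning for each model and task.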

Another way to detect hallucination is to train a second LLM to fact-check the first. The fact-checker can be given the “ground truth” along with the LLM’s response, and asked whether or not they agree. Alternatively, the fact-checker can be given several versions of the LLM’s answer to the same question, and asked whether they are all consistent. If not, it is more likely to be a hallucination. NVIDIA, a chipmaker, has developed an open-source framework for building guardrails that sit around an LLM to make it more reliable. One of these aims to prevent hallucinations by deploying this fact-checking when needed.
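The consistency check can be illustrated without a second model. In the sketch below, plain string matching stands in for the fact-checking LLM, which is a considerable simplification. The function names, the threshold and the sample answers are all hypothetical.

```python
from collections import Counter

def agreement(answers):
    """Fraction of sampled answers that match the most common one."""
    normalised = [a.strip().lower().rstrip(".") for a in answers]
    most_common_count = Counter(normalised).most_common(1)[0][1]
    return most_common_count / len(normalised)

def looks_hallucinated(answers, threshold=0.6):
    """Flag a question when the model's repeated answers disagree too often."""
    return agreement(answers) < threshold

stable = ["Paris", "paris.", " Paris "]  # same answer every time
shaky = ["1947", "1952", "1938"]         # a different answer every time

print(looks_hallucinated(stable))  # False
print(looks_hallucinated(shaky))   # True
```

String matching misses paraphrases, which is why a second model is used in practice to judge whether differently worded answers agree.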

Although such approaches can decrease the hallucination rate, says Ece Kamar, head of the AI frontiers lab at Microsoft, “it is unclear whether any of these techniques is going to completely get rid of hallucinations.” In many cases, that would be akin to self-sabotage. If an LLM is asked to generate ideas for a fantasy novel, for example, its output would be disappointing if limited to the world as it is. Consequently, says Dr Kamar, her research aims not to get rid of all hallucinations, but rather to stop the model from hallucinating when it would be unhelpful.

Safe and warm

The hallucination problem is one facet of the larger “alignment” problem in the field of AI: how do you get AI systems to reliably do what their human users intend and nothing else? Many researchers believe the answer will come in training bigger LLMs on more and better data. Others believe that LLMs, as generative and probabilistic models, will never be completely rid of unwanted hallucinations.

Or, the real problem might be not with the models but with their human users. Producing language used to be a uniquely human capability. LLMs’ convincing textual outputs make it all too easy to anthropomorphise them, to assume that LLMs also operate, reason and understand like humans do. There is still no conclusive evidence that this is the case. LLMs do not learn self-consistent models of the world. And even as models improve and the outputs become more aligned with what humans produce and expect, it is not clear that the insides will become any more human. Any successful real-world deployment of these models will probably require training humans how to use and view AI models as much as it will require training the models themselves.

© 2023, The Economist Newspaper Limited. All rights reserved. From The Economist, published under licence. The original content can be found on www.economist.com
